The future of genetic engineering at the moment is a mystery to everyone. The concept of reprogramming life is an oh-so-cool idea, but it is mostly being used nowadays in the most sophisticated labs. How will genetic engineering change in the future, though? Who will use it? And how?

In an attempt to provide a starting point for a discussion, I’ve analyzed the issue according to Daniel Burrus’ “Eight Pathways of Technological Advancement”, found in his book Flash Foresight. While the book provides more insights about creativity and business skills than about foresight, it does contain some interesting gems like the Eight Pathways. I’ve led workshops in the past where I taught chief executives how to use this methodology to gain insights about the future of their products, and it has been a great success. So in this post we’ll try applying it to genetic engineering – and we’ll see what comes out.

Eight Pathways of Technological Advancement

Make no mistake: technology does not “want” to advance or to improve. There is no law of nature dictating that technology will advance, or in what direction. Human beings improve technology, generation after generation, to better solve their problems and make their lives easier. Since we roughly understand humans and their needs and wants, we can often identify how technologies will improve in order to answer those needs. The Eight Pathways of Technological Advancement, therefore, are generally those that adapt technology to our needs.

Let’s go briefly over the pathways, one by one. If you want a better understanding and more elaborate explanations, I suggest you read the full Flash Foresight book.

First Pathway: Dematerialization

By dematerialization we mean literally to remove atoms from the product, leading directly to its miniaturization. Cellular phones, for example, have become much smaller over the years, as did computers, data storage devices and generally any tool that humans wanted to make more efficient.

Of course, not every product undergoes dematerialization. Even if we were to miniaturize cars’ engines, the cars themselves would still have to stay large enough to hold at least one passenger comfortably. So we need to take into account that the device should still be able to fulfil its original purpose.

Second Pathway: Virtualization

Virtualization means that we take certain processes and products that currently exist or are being conducted in the physical world, and transfer them fully or partially into the virtual world. In the virtual world, processes are generally streamlined, and products have almost no cost. For example, modern car companies take as little as 12 months to release a new car model to market. How can engineers complete the design, modeling and safety testing of such complicated models in less than a year? They’re simply using virtualized simulation and modeling tools to design the cars, up to the point when they’re crashing virtual cars with virtual crash dummies in them into virtual walls to gain insights about their (physical) safety.

Third Pathway: Mobility

Human beings invent technology to help them fulfill certain needs and take care of their woes. Once that technology is invented, it’s obvious that they would like to enjoy it everywhere they go, at any time. That is why technologies become more mobile as the years go by: in the past, people could only speak on the phone from the post office; today, wireless phones can be used anywhere, anytime. Similarly, cloud computing enables us to work on every computer as though it were our own, by utilizing cloud applications like Gmail, Dropbox, and others.

Fourth Pathway: Product Intelligence

This pathway does not need much of an explanation: we experience its results every day. Whenever our GPS navigation system speaks up in our car, we are reminded of the artificial intelligence engines that help us in our lives. As Kevin Kelly wrote in his WIRED piece in 2014 – “There is almost nothing we can think of that cannot be made new, different, or interesting by infusing it with some extra IQ.”

Fifth Pathway: Networking

The power of networking – connecting people and items – becomes clear in our modern age: Napster was the result of networking; torrents are the result of networking; even bitcoin and blockchain technology are manifestations of networking. Since products and services can gain so much from connecting their users, many of them take this pathway into the future.

Sixth Pathway: Interactivity

As products gain intelligence of their own, they also become more interactive. Google completes our search phrases for us; Amazon suggests products we might desire according to our past purchases. These service providers are interacting with us automatically, to provide a better service for the individual, instead of catering to some average of the masses.

Seventh Pathway: Globalization

Networking means that we can make connections all over the world, and as a result – products and services become global. Crowdfunding firms like Kickstarter, which enable local businesses to gain support from the global community, are a great example of globalization. Small firms can find themselves capable of catering to a global market thanks to improvements in mail delivery systems – like a company that delivers socks monthly – and that is another example of globalization.

Eighth Pathway: Convergence

Industries are converging, and so are services and products. The iPhone is a convergence of a cellular phone, a computer, a touch screen, a GPS receiver, a camera, and several other products that have come together to create a unique device. Similarly, modern aerial drones could also be considered a result of the convergence pathway: a camera, a GPS receiver, an inertial measurement unit, and a few propellers to carry the entire unit in the air. All of the above are useful on their own, but together they create a product that is much more than the sum of its parts.

How could genetic engineering progress along the Eight Pathways of technological improvement?

Pathways for Genetic Engineering

First, it’s safe to assume that genetic engineering as a practice will require less space and fewer tools to conduct (Dematerializing genetic engineering). That is hardly surprising, since biotechnology companies are constantly releasing new kits and appliances that streamline, simplify and add efficiency to lab work. This trend also answers the need for Mobility (the third pathway), since it means complicated procedures could be performed outside the top universities and labs.

As part of streamlining the work process of genetic engineers, some elements would be virtualized. As a matter of fact, the Virtualization of genetic engineering has been taking place over the past two decades, with scientists ordering DNA and RNA sequences over the internet, and browsing online genomic databases like those of NCBI and UCSC. The next step of virtualization seems to be occurring right now, with companies like Genome Compiler creating ‘browsers’ for the genome, with bright colors and easily understandable explanations that reduce the level of skill needed to plan an experiment involving genetic engineering.

How can we apply the pathway of Product Intelligence to genetic engineering? Quite easily: virtual platforms for designing genetic engineering experiments will involve AI engines that will aid the experimenter with the task. The AI assistant will understand what the experimenter wants to do, suggest approaches, methodologies and DNA sequences that will help accomplish it, and possibly even – in a decade or two – conduct the experiment automatically. Obviously, that also answers the criterion of Interactivity.

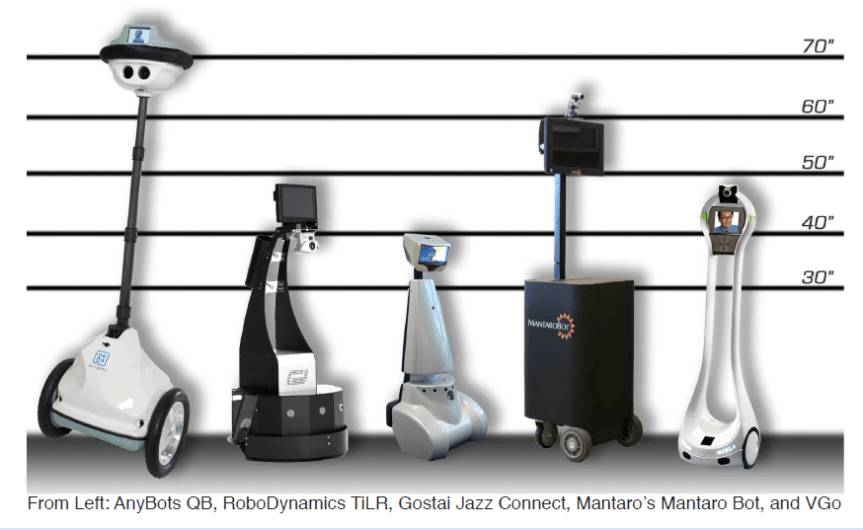

If this future sounds far-fetched, you should take into account that there are already lab robots, like Adam and Eve (see below), conducting the most convoluted experiments. As the field of robotics makes strides forward, it is quite possible that we will see similar rudimentary robots working in makeshift Do-It-Yourself biology labs.

Networking and Globalization are essentially the same for the purposes of this discussion, and complement Virtualization nicely. Communities of biology enthusiasts are already forming all over the world, and they’re sharing their ideas and virtual schematics with each other. The annual iGEM (International Genetically Engineered Machine) competition is good evidence of that: undergraduate students worldwide are taking part in this competition, designing parts of useful genetic code and sharing them freely with each other. That’s Networking and Globalization for sure.

Last but not least, we have Convergence – the convergence of processes, products and services into a single overarching system of genetic engineering.

Well, then, what would a convergence of all the above pathways look like?

The Convergence of Genetic Engineering

Bringing all of the pathways together leads us to a future in which genetic engineering can be performed by nearly anyone, at any place. The process of designing genetic engineering projects will be largely virtualized, and will be aided by artificial assistants and advisors. The actual genetic engineering will be conducted in sophisticated labs – as well as in makers’ houses, and in DIY enthusiasts’ kitchens. Ideas for new projects, and designs of successful past projects, will be shared on the internet. Parts of this vision – like virtualization of experiments – are happening right now. Other parts, like AI involvement, are still in the works.

What does this future mean for us? Well, it all depends on whether you’re optimistic or pessimistic. If you’re prone to pessimism, this future may look to you like a disaster waiting to happen. When teenagers and terrorists are capable of designing and creating deadly bacteria and viruses, the future of mankind is far from safe. If you’re an optimist, you could consider that as the power to re-engineer life comes down to the masses, innovations will rise everywhere. We will see glowing trees replacing lightbulbs in the streets, genetically engineered crops with better traits than ever before, and therapeutics (and drugs) being synthesized in human intestines. The truth, as usual, is somewhere in between – and we still have to discover it.

Conclusion

If you’ve been reading this blog for some time, you may have noticed a recurring pattern: I inquire into a certain subject, and then analyze it according to a certain foresight methodology. So far, such posts have covered the Business Theory of Disruption (used to analyze the future of collectible card games), Causal Layered Analysis (used to analyze the future of aerial drones and of medical mistakes) and Pace Layer Thinking. I hope to go on giving you some orderly and proven methodologies that help in thinking about the future.

How you actually use these methodologies in your business, class or salon talk – well, that’s up to you.