History is a story that will never be told fully. So much of the information – almost all of it – is lost to the past, or was never recorded in the first place. We can barely make sense of the present, in which information about events and the people behind them keeps being released every day. What chance do we have, then, of fully deciphering the complex stories underlying history – the betrayals, the upheavals, the personal stories of the individuals who shaped events?

The answer has to be that we have no way of reaching any certainty about the stories we tell ourselves about our past.

But we do make some efforts.

Medical doctors and historians try to make sense of biographies and ancient skeletons in order to retro-diagnose ancient kings and queens. Occasionally they identify diseases and disorders that were unknown or misunderstood at the time those individuals lived. Mummies of ancient pharaohs are x-rayed, and we suddenly gain a better understanding of a story that unfolded more than three thousand years ago, realizing that the pharaoh Ramesses II suffered from a degenerative spinal condition.

Similarly, geneticists and microbiologists use DNA evidence to resolve mysteries and bring some historical stories to a conclusive end. DNA evidence from bones has allowed us to put to rest the rumors, for example, that two of the children of Czar Nicholas II survived the family’s execution in 1918, in the aftermath of the Russian Revolution.

The above examples have something in common: they all require hard work by human experts. The experts need to pore over ancient histories, analyze the data and the evidence, and at the same time have a good understanding of the science and medicine of the present.

What happens, though, when we let a computer perform similar analyses in an automatic fashion? How many stories about the past could we resolve then?

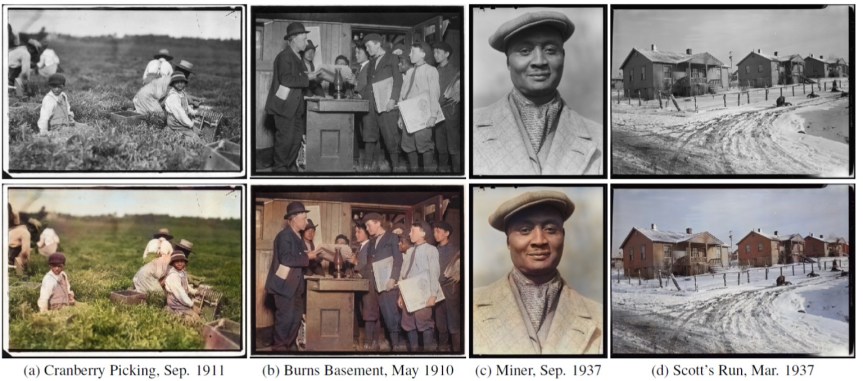

We are rapidly making progress towards such achievements. Recently, three authors from Waseda University in Japan published a paper showing that they can use a computer to colorize old black & white photos. They rely on convolutional neural networks, which are loosely modeled on certain structures of the biological brain. Convolutional neural networks have a strong capacity for learning, and can thus be trained to perform certain cognitive tasks – like adding color to old photos. While computerized coloring has been developed and used before, the authors’ methodology seems to achieve better results than earlier approaches, with 92.6 percent of the colored images looking natural to users.

This is essentially an expert system, an AI engine operating in a way similar to that of the human brain. It studies thousands upon thousands of pictures, and then applies its insights to new pictures. Moreover, the system can now go autonomously over every picture ever taken and add a new layer of information to it.

There are boundaries to the method, of course. Even the best AI engine can miss its mark in cases where the existing information is not sufficient to produce a reliable insight. In the examples below you can see that the AI colored the tent orange rather than blue, since it had no way of knowing what color it was originally.

But will that stay the case forever?

As I previously discussed in the Failures of Foresight series of posts on this blog, the Failure of Segregation makes it difficult for us to forecast the future because we try to look at each trend and each piece of evidence on its own. Let’s try to work past that failure, and instead consider what happens when an AI expert coloring system is combined with an AI system that recognizes items like tents and associates them with certain brands, and can even analyze how many tents of each color of that brand were sold in every year – or at least what the most popular tent color was at that time.

When you combine all of those AI engines together, you get a machine that can tell you a highly nuanced story about the past. Much of it is guesswork, obviously, but those are quite educated guesses.

The Artificial Exploration of the Past

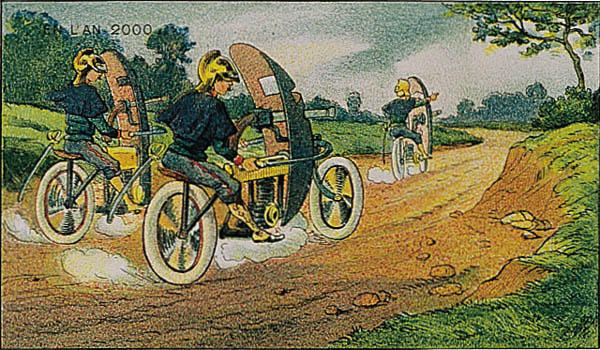

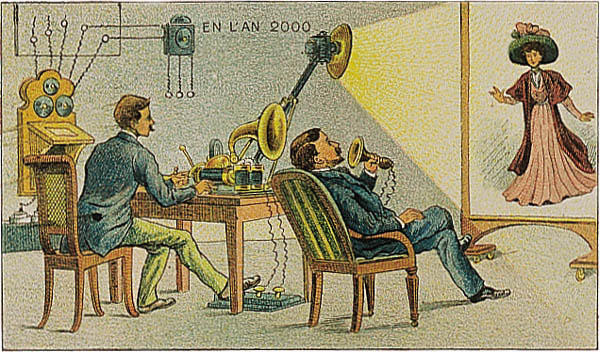

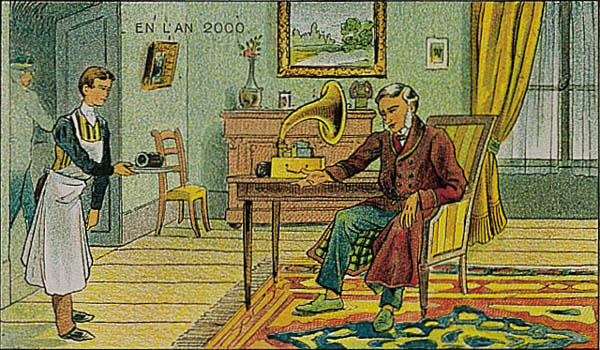

In the near future, we’ll use many different kinds of AI expert systems to explore the stories of the past. Some artificial historians will discover recurring patterns in history – princes assassinating their kingly fathers, for example – that have a higher probability of occurring, and will analyze ancient stories accordingly. Other artificial historians will compare genealogies, while yet others will analyze ancient scriptures and identify different patterns of writing. In fact, such an algorithm has already been applied to the Bible, indicating that the Torah was written by several different authors and distinguishing between them.

The artificial exploration of the past is going to add many fascinating details to stories which we’ve long thought were settled and concluded. But it also raises an important question: when our children and children’s children look back at our present and try to derive meaning from it – what will they find out? How complete will their stories of their past and our present be?

I suspect the stories – the actual knowledge and understanding of the order of events – will be even more complete than what we, who dwell in the present, know ourselves.

Past-Future

In the not-so-far-away future, machines will be used to analyze all of the world’s data from the early 21st century. This is a massive amount of data: 2.5 quintillion bytes of data are created daily, which would fill roughly 100 million standard 25 GB Blu-ray discs. It is astounding to realize that 90 percent of the world’s data today has been created in just the last two years. Human researchers would not be able to make much sense of it, but advanced AI algorithms – a super-intelligence, in some ways – could actually have the tools to cross-link many different pieces of information together to obtain the story of the present: to find out what movies families watched on a specific day, in which hotel the President of the United States stayed during a recent visit to France and what snacks he ordered from room service, and many other bits of trivia.
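To get a feel for that scale, here is a minimal back-of-the-envelope sketch. It assumes decimal gigabytes (1 GB = 10^9 bytes) and the standard Blu-ray capacities of 25 GB (single-layer) and 50 GB (dual-layer); the function and constant names are illustrative only.

```python
# Daily data volume quoted in the text: 2.5 quintillion bytes.
BYTES_PER_DAY = 2_500_000_000_000_000_000

# Assumption: decimal gigabytes, 1 GB = 10**9 bytes.
BYTES_PER_GB = 10**9


def discs_needed(total_bytes: int, disc_capacity_gb: int) -> int:
    """Return how many full discs of the given capacity total_bytes would fill."""
    return total_bytes // (disc_capacity_gb * BYTES_PER_GB)


# Assumed capacities: standard single-layer and dual-layer Blu-ray discs.
for label, capacity_gb in [("single-layer", 25), ("dual-layer", 50)]:
    count = discs_needed(BYTES_PER_DAY, capacity_gb)
    print(f"{label} ({capacity_gb} GB): {count:,} discs per day")
```

The count is sensitive to the assumed disc capacity: a count of ten million discs would correspond to about 250 GB per disc.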

Are those details useless? They may seem so to our limited human comprehension, but they will form the basis for the AI engines to better understand the past, and produce better stories of it. When the people of the future try to understand how World War 3 broke out, their AI historians may actually conclude that it all began with a presidential case of indigestion at a certain French hotel, which annoyed the American president so much that it prevented him from making the most rational choices over the following couple of days. A hypothetical scenario, obviously.

Futuronymity – Maintaining Our Privacy from the Future

We are gaining improved tools to explore the past with, and to derive insights and new knowledge even where information is missing. These tools will be improved further in the future, and will be used to analyze our current times – the early 21st century – as well.

What does it mean for you and me?

Most importantly, you should realize that almost every action you take in the virtual world will be scrutinized by your children’s children, probably after your death. Your actions in the virtual world are recorded all the time, and if the documentation survives into the future, then the next generations are going to know all about your browsing habits in the middle of the night. Yes, even though you turned incognito mode on.

This means we need to develop a new concept for privacy: futuronymity (derived from Future and Anonymity), which will obscure our lives from the eyes of future generations. Politicians are always concerned about this kind of privacy, since they know their critical decisions will be considered and analyzed by historians. In the future, common people will find themselves under similar scrutiny by their descendants. If our current hobby is going to psychologists to understand just how our parents ruined us, then the hobby of our grandchildren will be to go to the computer to find out the same.

Do we even have the right to futuronymity? Should we hide from the next generations the truth about how their future was formed, and who was responsible?

That question is no longer in the hands of individuals. In the past, private people could’ve just incinerated their hard drives with all the information on them. Today, most of the information is in the hands of corporations and governments. If we want them to dispose of it – if we want any say in which parts they’ll preserve and which will be deleted – we should speak up now.