A week ago I lectured in front of an exceedingly intelligent group of young people in Israel – “The President’s Scientists and Inventors of the Future”, as they’re called. I decided to talk about the future of robotics and its uses in society, and as an introduction to the lecture I tried to dispel a few myths about robots that I’ve heard repeatedly from older audiences. Perhaps not so surprisingly, the kids were just as disenchanted with these myths as I was. All the same, I’m writing the four robot myths down here, for all the ‘old’ people (20+ years old) who are not as well acquainted with technology as our kids.

As a side note: I lectured in front of the Israeli teenagers about the future of robotics, even though I’m currently residing in the United States. That’s another thing robots are good for!

First Myth: Robots must be shaped as Humanoids

Ever since Karel Capek’s first play about robots, the general public has assumed that robots have to resemble humans in their appearance: two legs, two hands and a head with a brain. Fortunately, most sci-fi authors stop at that point and do not add genitalia as well. The idea that robots have to look just like us is, quite frankly, ridiculous and stems from an inflated appreciation of our own form.

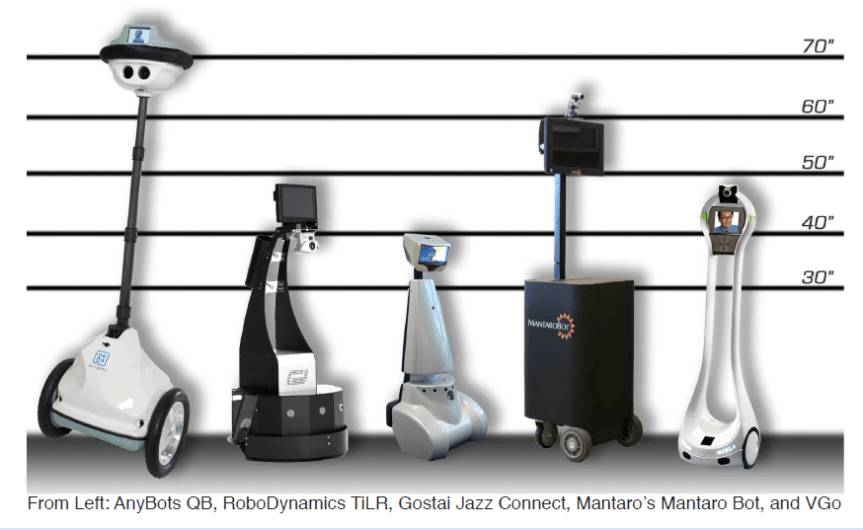

Today, this myth is being dispelled rapidly. Autonomous vehicles – basically robots designed to travel on the roads – obviously look nothing like human beings. Even telepresence robot manufacturers have given up on robotic arms and legs, and are producing robots that often look more like a broomstick on wheels. Robotic legs are simply too difficult to operate, too costly in energy, and much too fragile with the materials we have today.

Second Myth: Robots have a Computer for a Brain

This myth is interesting in that it’s both true and false. Obviously, robots today are operated by artificial intelligence run on a computer. However, the artificial intelligence itself is vastly different from the simple, rule-based systems we’ve had in the past. The state-of-the-art AI engines are based on artificial neural networks: basically a very simple simulation of a small part of a biological brain.
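For readers who like to see such things in code, here is a minimal sketch of a single artificial "neuron" (a perceptron) that learns the logical AND function from examples rather than from a hand-written rule. It is a toy illustration only: the function names, the learning rule and the number of training rounds are my own assumptions, and real neural networks stack a very large number of such units and train them differently.

```python
def predict(weights, bias, inputs):
    # The neuron "fires" (outputs 1) when the weighted sum exceeds zero.
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train(examples, epochs=20):
    # Hypothetical starting point: all weights and the bias are zero.
    weights, bias = [0, 0], 0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            # Nudge each weight toward the correct answer.
            weights = [w + error * x for w, x in zip(weights, inputs)]
            bias += error
    return weights, bias


# Examples of the logical AND function; no rule for AND is written anywhere.
examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train(examples)
print([predict(weights, bias, x) for x, _ in examples])  # [0, 0, 0, 1]
```

Nothing in the program states what AND means. The behavior comes entirely from the numbers the neuron settles on while looking at the examples.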

The big breakthrough with artificial neural networks came about when Andrew Ng and other researchers in the field showed they could use cheap graphics processing units (GPUs) to run sophisticated simulations of artificial neural networks. Suddenly, artificial neural networks appeared everywhere, for a fraction of their previous price. Today, all the major IT companies are using them, including Google, Facebook, Baidu and others.

Although artificial neural networks have mostly been confined to IT in recent years, they are beginning to direct robot activity as well. By employing artificial neural networks, robots can start making sense of their surroundings, and can even be trained for new tasks by watching human beings do them instead of being programmed manually. In effect, robots employing this new technology can be thought of as having (exceedingly) rudimentary biological brains, and in the next decade can be expected to reach an intelligence level similar to that of a dog or a chimpanzee. We will be able to train them for new tasks simply by instructing them verbally, or even by showing them what we mean.

This video clip shows how an artificial neural network AI can ‘solve’ new situations and learn from games, until it gets to a point where it’s better than any human player.

Admittedly, the companies using artificial neural networks today are operating large clusters of GPUs that take up plenty of space and consume a great deal of energy. Such clusters cannot be easily placed in a robot’s ‘head’, or wherever its brain is supposed to be. However, this problem is easily solved when the third myth is dispelled.

Third Myth: Robots as Individual Units

This is yet another myth that we see very often in sci-fi. The Terminator, Asimov’s robots, R2-D2 – those are all autonomous, individual units, operating by themselves without any connection to The Cloud. That is hardly surprising, considering there was no information Cloud – or even widely available internet – back in the day when those tales and scripts were written.

Robots in the near future will function much more like a team of ants than as individual units. Any piece of information that one robot acquires and deems important will be uploaded to the main servers, analyzed and shared with the other robots as needed. Robots will, in effect, learn from each other in a process that will increase their intelligence, experience and knowledge exponentially over time. Indeed, shared learning will accelerate the rate of AI development, since the more robots we have in society, the smarter they will become. And the smarter they become, the more we will want to integrate them into our daily lives.

The Tesla cars are a good example of this sort of mutual learning and knowledge sharing. In the words of Elon Musk, Tesla’s CEO –

“The whole Tesla fleet operates as a network. When one car learns something, they all learn it.”
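To make the idea of shared learning concrete, here is a minimal sketch of robots pooling what they learn through a central server. This is not how Tesla's system (or any real fleet) is built; the `FleetServer` and `Robot` classes, their methods and the pothole example are all hypothetical names I made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class FleetServer:
    """Hypothetical central server that pools what individual robots learn."""
    knowledge: dict = field(default_factory=dict)

    def upload(self, key, lesson):
        # A robot reports something it deems important.
        self.knowledge[key] = lesson

    def download(self):
        # Hand out a copy of everything the fleet has learned so far.
        return dict(self.knowledge)


@dataclass
class Robot:
    name: str
    server: FleetServer
    knowledge: dict = field(default_factory=dict)

    def learn(self, key, lesson):
        # Learn locally, then share with the rest of the fleet.
        self.knowledge[key] = lesson
        self.server.upload(key, lesson)

    def sync(self):
        # Pull in the lessons that other robots have uploaded.
        self.knowledge.update(self.server.download())


server = FleetServer()
car_a = Robot("car_a", server)
car_b = Robot("car_b", server)

car_a.learn("pothole@main_st", "slow down")
car_b.sync()
print(car_b.knowledge)  # {'pothole@main_st': 'slow down'}
```

The second car never saw the pothole, yet after a single sync it knows what the first car knows. Every robot added to the fleet becomes another source of lessons for all the others.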

Fourth Myth: Robots can’t make Moral Decisions

In my experience, many people still adhere to this myth, under the belief that robots do not have consciousness, and thus cannot make moral decisions. This is a false inference: I can easily program an autonomous vehicle to stop before hitting human beings on the road, even without the vehicle possessing any kind of consciousness. Moral behavior, in this case, is the product of programming.

Things get complicated when we realize that autonomous vehicles, in particular, will have to make novel moral decisions that no human being was ever required to make in the past. What should an autonomous vehicle do, for example, when it loses control over its brakes, and finds itself heading for a collision with a man crossing the road? Obviously, it should veer to the side of the road and hit the wall. But what should it do if it calculates that its ‘driver’ will be killed as a result of the collision with the wall? Who is more important in this case? And what happens if two people cross the road instead of one? What if one of those people is a pregnant woman?

These questions demonstrate that it is hardly enough to program an autonomous vehicle for specific encounters. Rather, we need to program into it (or train it to obey) a set of moral rules – heuristics – according to which the robot will interpret any new occurrence and reach a decision accordingly.
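As a rough sketch of what "programming in a set of moral rules" could look like, the snippet below treats a rule as a function that scores predicted outcomes, and lets the vehicle pick the best-scoring maneuver in whatever situation it meets. Everything here is an assumption made for illustration: the `Outcome` fields, the two rule functions and the numbers are hypothetical, and neither rule is offered as the right answer to the questions above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """Predicted result of one maneuver (all values are hypothetical)."""
    maneuver: str
    pedestrians_harmed: int
    passengers_harmed: int


# A "moral rule" here is just a function that scores an outcome; lower is better.
def minimize_total_harm(outcome):
    return (outcome.pedestrians_harmed + outcome.passengers_harmed,)


def protect_passengers_first(outcome):
    # Compare passenger harm first, then pedestrian harm as a tie-breaker.
    return (outcome.passengers_harmed, outcome.pedestrians_harmed)


def decide(options, rule):
    # Apply the same rule to any situation, including ones never seen before.
    return min(options, key=rule)


# The brake-failure scenario, with two people crossing the road.
options = [
    Outcome("stay_on_course", pedestrians_harmed=2, passengers_harmed=0),
    Outcome("veer_into_wall", pedestrians_harmed=0, passengers_harmed=1),
]

print(decide(options, minimize_total_harm).maneuver)       # veer_into_wall
print(decide(options, protect_passengers_first).maneuver)  # stay_on_course
```

The same situation yields opposite decisions depending on which rule is plugged in. The moral choice is made by whoever writes or trains the rule, and the vehicle then carries it out.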

And so, robots must make moral decisions.

Conclusion

As I wrote at the beginning of this post, the youth and the ‘techies’ are already aware of how out-of-date these myths are. Nobody yet knows, though, where the new capabilities of robots will take us when they are combined. What will our society look like when robots are everywhere, sharing their intelligence, learning from everything they see and hear, and making moral decisions not from the perception of an individual unit (as we human beings do), but from an overarching perception spanning insights and data from millions of units at the same time?

This is where we are heading – a super-intelligence that combines incredibly sophisticated AI with robots serving as its eyes, ears and fingertips. It’s a frightening future, to be sure. How could we possibly control such a super-intelligence?

That’s a topic for a future post. In the meantime, let me know if there are any other myths about robots you think it’s time to ditch!