It all began in a horribly innocent fashion, as such things often do. The Center for Middle East Studies at Brown University, near my home, held a “public discussion” about the future of Palestinians in Israel. Naturally, as an Israeli living in the States, I’m still very much interested in the region, so I took a look at the list of panelists and immediately discovered that they all came from the same background and shared the same point of view: Israel was the colonialist oppressor, and in their view that was pretty much all there was to it.

Quite frankly, this seemed bizarre to me: how can you have a discussion about the future of a people in a region without understanding the complexities of their geopolitical situation? How can you talk about the future of a war-torn region like the Middle East when nobody speaks about security issues, or conveys the state of mind of Israeli citizens or their government? In short, how can you have a discussion when all the panelists say exactly the same thing?

So I decided to do something about it, and therein lies my downfall.

I am the proud co-founder of TeleBuddy, a robotics services start-up that operates telepresence robots worldwide. If you want to reach somewhere far away (Israel, California, or even China), we can place a robot there, so that instead of wasting time and health on flights, you can simply log into the robot and be there immediately. We mainly use Double Robotics’ robots, and since I had one available, I immediately thought we could use it to bring a representative of the Israeli point of view to the panel, in a robotic body.

Things began moving in a blur from that point. I obtained permission from Prof. Beshara Doumani, who organized the panel, to bring a robot to the event. StandWithUs, an organization that disseminates information about Israel in the United States, graciously agreed to send a representative by the name of Shahar Azani to log into the robot, and so it happened that I came to the event with possibly the first-ever robotic diplomat.

Things went very well at the event itself. While my robotic friend was not allowed to speak from the stage, he talked with people at the venue before the event began, and had plenty of fun. Some of the people at the event seemed excited about the robot. Others were reluctant to approach him, so he talked with other people instead. The entire thing was very civil, as other panel participants later remarked. I really thought we had found a good use for the robot, and even suggested to the organizers that next time they could use TeleBuddy’s robots to ‘teleport’ a different representative, maybe a Palestinian, to their event. I went home happy, feeling I had made just a little bit of a difference in the world and contributed to an actual discussion between the two sides of a conflict.

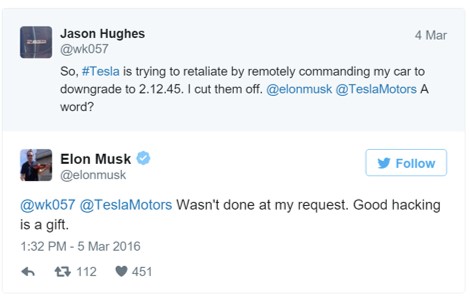

A few days later, Open Hillel published the following statement about the event:

“In a dystopian twist, the latest development in the attack on open discourse by right-wing pro-Israel groups appears to be the use of robots to police academic discourse. At a March 3, 2016 event about Palestinian citizens of Israel sponsored by Middle East Studies at Brown University, a robot attended and accosted students. The robot used an iPad to display a man from StandWithUs, which receives funding from Israel’s government.

…

Before the event began, students say, the robot approached students and harassed them about why they were attending the event. Students declined to engage with this bizarre form of intimidation and ignored the robot. At the event itself, the robot and the StandWithUs affiliate remained in the back. During the question and answer session, the man briefly left the robot’s side to ask a question.

…

It is not yet known whether this was the first use of a robot to monitor Israel-Palestine discourse on campus. … Open Hillel opposes the attempts of groups like StandWithUs to monitor students and faculty. As a student-led grassroots campaign supported by young alumni, professors, and rabbis, Open Hillel rejects any attempt to stifle or target student or faculty activists. The use of robots for purposes of surveillance endangers the ability of students and faculty to learn and discuss this issue. We call upon outside groups such as StandWithUs to conduct themselves in accordance with the academic principles of open discourse and debate.”

I later happened to run into some of the students who had been at the event, and asked them why they believed the robot was used for surveillance, or to harass students. In return, they accused me of being a spy for the Israeli government. Why? Obviously, because I had operated a “surveillance drone” on American soil. That’s perfect circular logic.

Lessons

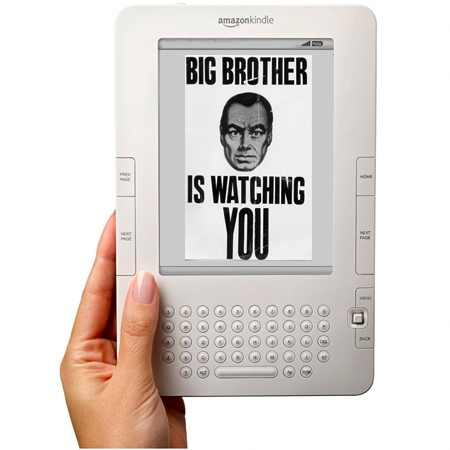

There are lessons aplenty to be drawn from this bizarre incident, but the one that strikes me in particular is that you can’t easily ignore existing cultural sentiments and paradigms without taking a hit in the process. The robot was obviously not a surveillance drone, nor was it meant for surveillance of any kind, but Open Hillel managed to rebrand it by playing on fears that have deep roots in the American public. They did it to further their own goal of getting some PR, and they did it so skillfully that I can’t help but applaud them for it. Quite frankly, I wish their PR people were working for me.

That said, there are issues here that need to be dealt with if telepresence robots are ever to become part of critical discussions. The fear that the robot may be recording or taking pictures at an event is justified: a tech-savvy person controlling the robot could certainly find a way to do that. However, I can’t help but feel that there are far simpler ways to accomplish the same thing, such as using a smartphone or the covert Memoto lifelogging camera. If you fear being recorded in public, you should know that telepresence robots are probably the least of your concerns.

Conclusions

The honest truth is that this is a brand-new field for everyone involved. How should robots behave at conferences? Nobody knows. How should they talk with human beings at panels or public events? Nobody can tell yet. How can we make human beings feel more comfortable when they share a space with a suit-wearing robot that can potentially record everything it sees? Nobody has any clue whatsoever.

These issues should be taken into consideration in any venture to involve robots in the public sphere.

It seems to me that we need some kind of standard, developed collaboratively by ethicists, social scientists, and roboticists, that ensures a high level of data encryption for telepresence robots and guarantees that any data collected by the robot is deleted on the spot.

We need, in short, to develop proper robotic etiquette.

And if we fail to do that, then it shouldn’t really surprise anyone when telepresence robots are branded as “surveillance drones” used by Zionist spies.