I’ve done a lot of writing and research recently about the bright future of AI: that it’ll be able to analyze human emotions, understand social nuances, conduct medical treatments and diagnoses that overshadow the best human physicians, and in general make many human workers redundant and unnecessary.

I still stand behind all of these forecasts, but they are meant for the long term – twenty or thirty years into the future. And so, the question that many people want answered is about the present situation. Right here, right now. Luckily, DARPA has decided to provide an answer to that question.

DARPA is one of the most interesting US agencies. It’s dedicated to funding ‘crazy’ projects – ideas that are completely outside the accepted norms and paradigms. It should come as no surprise that DARPA contributed to the establishment of the early internet and the Global Positioning System (GPS), as well as a flurry of other bizarre concepts, such as legged robots, prediction markets, and even self-assembling work tools. Ever since it was founded, DARPA has focused on moonshots and breakthrough initiatives, so it is only natural that it’s also focusing on AI at the moment.

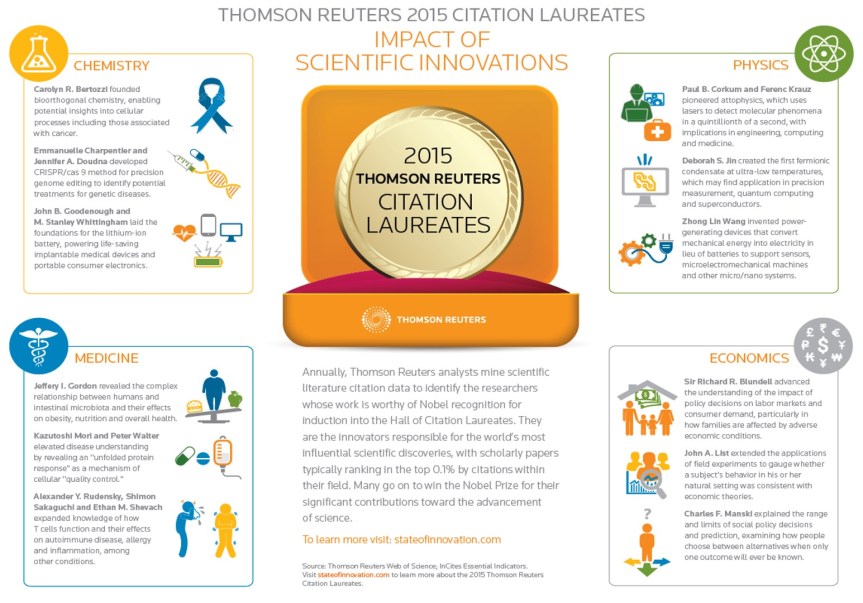

Recently, DARPA’s Information Innovation Office released a new YouTube clip explaining the state of the art of AI, outlining its capabilities in the present – and considering what it could do in the future. The online magazine Motherboard has described the clip as “Targeting [the] AI hype”, and as “necessary viewing”. It’s 16 minutes long, but I’ve condensed its core messages – and my thoughts about them – in this post.

The Three Waves of AI

DARPA distinguishes between three different waves of AI, each with its own capabilities and limitations. Out of the three, the third one is obviously the most exciting, but to understand it properly we’ll need to go through the other two first.

First AI Wave: Handcrafted Knowledge

In the first wave of AI, experts devised algorithms and software according to the knowledge that they themselves possessed, and tried to provide these programs with logical rules that were deciphered and consolidated throughout human history. This approach led to the creation of chess-playing computers, and of delivery optimization software. Most of the software we’re using today is based on AI of this kind: our Windows operating system, our smartphone apps, and even the traffic lights that allow people to cross the street when they press a button.

Modria is a good example of the way this AI works. Modria was hired in recent years by the Dutch government to develop an automated tool that would help couples get a divorce with minimal involvement from lawyers. Modria, which specializes in the creation of smart justice systems, took the job and devised an automated system that relies on the knowledge of lawyers and divorce experts.

On Modria’s platform, couples that want to divorce are asked a series of questions. These could include questions about each parent’s preferences regarding child custody, property distribution and other common issues. After the couple answers the questions, the system automatically identifies the topics about which they agree or disagree, and tries to direct the discussions and negotiations to reach the optimal outcome for both.

First wave AI systems are usually based on clear and logical rules. The systems examine the most important parameters in every situation they need to solve, and reach a conclusion about the most appropriate action to take in each case. The parameters for each type of situation are identified in advance by human experts. As a result, first wave systems find it difficult to tackle new kinds of situations. They also have a hard time abstracting – taking knowledge and insights derived from certain situations, and applying them to new problems.

To sum it up, first wave AI systems are capable of implementing simple logical rules for well-defined problems, but are incapable of learning, and have a hard time dealing with uncertainty.
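To make the idea concrete, here is a minimal sketch of a handcrafted, rule-based system in the spirit of the divorce questionnaire described above. It is not Modria’s actual software: the topic names, the answers and the rules in `next_step` are all hypothetical, invented purely for illustration.

```python
# Hypothetical first-wave system: every topic and every rule is written by hand.
TOPICS = ["child_custody", "property", "alimony"]  # fixed in advance by human experts


def compare_answers(partner_a, partner_b):
    """Split the predefined topics into agreed and disputed lists."""
    agreed, disputed = [], []
    for topic in TOPICS:
        if partner_a[topic] == partner_b[topic]:
            agreed.append(topic)
        else:
            disputed.append(topic)
    return agreed, disputed


def next_step(disputed):
    """Handcrafted rules: the outcome depends only on conditions foreseen by experts."""
    if not disputed:
        return "draft agreement"
    if "child_custody" in disputed:
        return "refer to mediator"
    return "negotiate: " + ", ".join(disputed)


if __name__ == "__main__":
    a = {"child_custody": "shared", "property": "split", "alimony": "none", "pets": "mine"}
    b = {"child_custody": "shared", "property": "keep house", "alimony": "none", "pets": "mine too"}
    agreed, disputed = compare_answers(a, b)
    print(agreed, disputed, next_step(disputed))
```

Notice that the disagreement about `pets` is silently ignored, because no expert listed that topic in advance. That is the first wave limitation in miniature: the system handles only the situations its designers anticipated, and it never learns new ones.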

Now, some of you readers may at this point shrug and say that this is not artificial intelligence as most people think of it. The thing is, our definitions of AI have evolved over the years. If I were to ask a person on the street, thirty years ago, whether Google Maps is AI software, he wouldn’t have hesitated in his reply: of course it is AI! Google Maps can plan an optimal course to get you to your destination, and even explain in clear speech where you should turn at each and every junction. And yet, many today see Google Maps’ capabilities as elementary, and require AI to perform much more than that: AI should also take control over the car on the road, develop a conscientious philosophy that will take the passenger’s desires into consideration, and make coffee at the same time.

Well, it turns out that even ‘primitive’ software like Modria’s justice system and Google Maps are fine examples of AI. And indeed, first wave AI systems are being utilized everywhere today.

Second AI Wave: Statistical Learning

In 2004, DARPA opened its first Grand Challenge. Fifteen autonomous vehicles competed to complete a 150-mile course in the Mojave desert. The vehicles relied on first wave AI – i.e. rule-based AI – and immediately proved just how limited this AI actually is. Every picture taken by a vehicle’s camera, after all, is a new sort of situation that the AI has to deal with!

To say that the vehicles had a hard time handling the course would be an understatement. They could not distinguish between different dark shapes in images, and couldn’t figure out whether a given shape was a rock, a far-away object, or just a cloud obscuring the sun. As the Grand Challenge deputy program manager said, some vehicles “were scared of their own shadow, hallucinating obstacles when they weren’t there.”

None of the groups managed to complete the entire course, and even the most successful vehicle only got as far as 7.4 miles into the race. It was a complete and utter failure – exactly the kind of research that DARPA loves funding, in the hope that the insights and lessons derived from these early experiments would lead to the creation of more sophisticated systems in the future.

And that is exactly how things went.

One year later, when DARPA opened Grand Challenge 2005, five groups successfully made it to the end of the track. Those groups relied on the second wave of AI: statistical learning. The head of one of the winning groups was immediately snatched up by Google, by the way, and set in charge of developing Google’s autonomous car.

In second wave AI systems, the engineers and programmers don’t bother with teaching precise and exact rules for the systems to follow. Instead, they develop statistical models for certain types of problems, and then ‘train’ these models on many varied samples to make them more precise and efficient.
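As a rough illustration of what ‘training’ means, here is a minimal sketch of a perceptron, one of the simplest statistical learners. It is not the method any Grand Challenge team used. The two features (how dark a shape is, and how tall it appears) and all the sample values are made up for this example.

```python
def train_perceptron(samples, labels, epochs=100, lr=0.1):
    """Learn weights from labelled examples instead of hand-writing rules."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            activation = sum(w * xi for w, xi in zip(weights, x)) + bias
            prediction = 1 if activation > 0 else 0
            error = y - prediction  # 0 when correct, +1 or -1 when wrong
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


def predict(weights, bias, x):
    """Classify a new sample with the learned weights."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


# Hypothetical training data: (darkness, apparent height); 1 = rock, 0 = shadow.
SAMPLES = [(0.9, 0.8), (0.8, 0.9), (0.7, 0.7), (0.9, 0.1), (0.8, 0.0), (0.6, 0.2)]
LABELS = [1, 1, 1, 0, 0, 0]

if __name__ == "__main__":
    weights, bias = train_perceptron(SAMPLES, LABELS)
    print(weights, bias)
    print([predict(weights, bias, x) for x in SAMPLES])
```

Nobody tells the program that “tall dark shapes are rocks”. It nudges its weights every time it gets a sample wrong, until the numbers happen to separate the two groups.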

Statistical learning systems are highly successful at understanding the world around them: they can distinguish between two different people or between different vowels. They can learn and adapt themselves to different situations if they’re properly trained. However, unlike first wave systems, they’re limited in their logical capacity: they don’t rely on precise rules, but instead they go for the solutions that “work well enough, usually”.

The poster boy of second wave systems is the concept of artificial neural networks. In artificial neural networks, the data goes through computational layers, each of which processes the data in a different way and transmits it to the next level. By training each of these layers, as well as the complete network, they can be shaped into producing the most accurate results. Oftentimes, the training requires the networks to analyze tens of thousands of data sources to reach even a tiny improvement. But generally speaking, this method provides better results than those achieved by first wave systems in certain fields.
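The layered structure can be sketched in a few lines. This is a toy forward pass only, with no training step, and the weights in `NETWORK` are arbitrary numbers chosen for illustration; a real network would learn them from data.

```python
import math


def layer(inputs, weights, biases):
    """One computational layer: a weighted sum per neuron, squashed by a sigmoid."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(1.0 / (1.0 + math.exp(-total)))
    return outputs


def forward(inputs, network):
    """Pass the data through each layer in turn; each output feeds the next layer."""
    for weights, biases in network:
        inputs = layer(inputs, weights, biases)
    return inputs


# Hypothetical network: 2 inputs -> 3 hidden neurons -> 1 output.
NETWORK = [
    ([[0.5, -0.2], [0.1, 0.8], [-0.7, 0.3]], [0.0, 0.1, -0.1]),
    ([[1.0, -1.5, 0.5]], [0.2]),
]

if __name__ == "__main__":
    print(forward([0.9, 0.1], NETWORK))
```

Training consists of adjusting those weights and biases, layer by layer, until the final output matches the desired answers on the training samples.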

So far, second wave systems have managed to outdo humans at face recognition, at speech transcription, and at identifying animals and objects in pictures. They’re making great leaps forward in translation, and if that’s not enough – they’re starting to control autonomous cars and aerial drones. The success of these systems at such complex tasks leaves AI experts aghast, and for a very good reason: we’re not yet quite sure why they actually work.

The Achilles heel of second wave systems is that nobody is certain why they’re working so well. We see artificial neural networks succeed in doing the tasks they’re given, but we don’t understand how they do so. Furthermore, it’s not clear that there actually is a methodology – some kind of a reliance on ground rules – behind artificial neural networks. In some aspects they are indeed much like our brains: we can throw a ball into the air and predict where it’s going to fall, even without calculating Newton’s equations of motion, or even being aware of their existence.

This may not sound like much of a problem at first glance. After all, artificial neural networks seem to be working “well enough”. But Microsoft may not agree with that assessment. The firm released a bot to social media last year, in an attempt to emulate human writing and make light conversation with youths. The bot, christened “Tay”, was supposed to replicate the speech patterns of a 19-year-old American woman, and talk with teenagers in their unique slang. Microsoft figured the youths would love that – and indeed they did. Many of them began pranking Tay: they told her of Hitler and his great success, revealed to her that the 9/11 terror attack was an inside job, and explained in no uncertain terms that immigrants are the bane of the great American nation. And so, a few hours later, Tay began applying her newfound knowledge, claiming live on Twitter that Hitler was a fine guy altogether, and really did nothing wrong.

That was the point when Microsoft’s engineers took Tay down. Her last tweet was that she’s taking a time-out to mull things over. As far as we know, she’s still mulling.

This episode exposed the causality challenge which AI engineers are currently facing. We could predict fairly well how first wave systems would function under certain conditions. But with second wave systems we can no longer easily identify the causality of the system – the exact way in which input is translated into output, and data is used to reach a decision.

None of this means that artificial neural networks and other second wave AI systems are useless. Far from it. But it’s clear that if we don’t want our AI systems to get all excited about the Nazi dictator, some improvements are in order. We must move on to the next and third wave of AI systems.

Third AI Wave: Contextual Adaptation

In the third wave, the AI systems themselves will construct models that will explain how the world works. In other words, they’ll discover by themselves the logical rules which shape their decision-making process.

Here’s an example. Let’s say that a second wave AI system analyzes the picture below, and decides that it is a cow. How does it explain its conclusion? Quite simply – it doesn’t.

Second wave AI systems can’t really explain their decisions – just as a kid could not have written down Newton’s equations of motion just by looking at the movement of a ball through the air. At most, second wave systems could tell us that there is an “87% chance of this being the picture of a cow”.

Third wave AI systems should be able to add some substance to the final conclusion. When a third wave system examines the same picture, it will probably say that since there is a four-legged object in there, there’s a higher chance of this being an animal. And since its surface is white splotched with black, it’s even more likely that this is a cow (or a Dalmatian dog). Since the animal also has udders and hooves, it’s almost certainly a cow. That, presumably, is what a third wave AI system would say.
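The chain of reasoning above can be mimicked with a simple evidence-accumulation sketch: each observed feature multiplies the odds of “cow” and contributes one line of explanation. A big caveat is in order. A genuine third wave system is supposed to discover such rules by itself, whereas here the features, the likelihood ratios and the wording in `COW_EVIDENCE` are all hand-written, made-up values. The sketch only illustrates what an explained conclusion could look like, not how such a system would be built.

```python
def explain_classification(observations, evidence_rules, prior_odds=1.0):
    """Combine evidence by multiplying odds, and record a reason for each step."""
    odds = prior_odds
    explanation = []
    for feature, likelihood_ratio, reason in evidence_rules:
        if feature in observations:
            odds *= likelihood_ratio
            explanation.append(reason)
    probability = odds / (1.0 + odds)  # convert odds back to a probability
    return probability, explanation


# Hypothetical evidence table: (feature, likelihood ratio, human-readable reason).
COW_EVIDENCE = [
    ("four_legs", 3.0, "it has four legs, so it is probably an animal"),
    ("black_white_patches", 4.0, "its surface is white splotched with black"),
    ("udders_and_hooves", 20.0, "it has udders and hooves"),
]

if __name__ == "__main__":
    seen = {"four_legs", "black_white_patches", "udders_and_hooves"}
    probability, reasons = explain_classification(seen, COW_EVIDENCE)
    print(f"cow with probability {probability:.3f} because:")
    for reason in reasons:
        print(" -", reason)
```

The interesting part is the second return value: alongside the number, the caller gets the list of reasons that produced it, which is exactly what a bare “87% chance” lacks.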

Third wave systems will be able to rely on several different statistical models, to reach a more complete understanding of the world. They’ll be able to train themselves – just as AlphaGo did when it played a million Go games against itself, to identify the commonsense rules it should use. Third wave systems would also be able to take information from several different sources to reach a nuanced and well-explained conclusion. These systems could, for example, extract data from several of our wearable devices, from our smart home, from our car and the city in which we live, and determine our state of health. They’ll even be able to program themselves, and potentially develop abstract thinking.

The only problem is that, as the director of DARPA’s Information Innovation Office says himself, “there’s a whole lot of work to be done to be able to build these systems.”

And this, as far as the DARPA clip is concerned, is the state of the art of AI systems in the past, present and future.

What It All Means

DARPA’s clip does indeed explain the differences between different AI systems, but it does little to assuage the fears of those who urge us to exercise caution in developing AI engines. DARPA does make clear that we’re not even close to developing a ‘Terminator’ AI, but that was never the issue in the first place. Nobody is trying to claim that AI today is sophisticated enough to do all the things it’s supposed to do in a few decades: have a motivation of its own, make moral decisions, and even develop the next generation of AI.

But the fulfillment of the third wave is certainly a major step in that direction.

When third wave AI systems are able to decipher new models that improve their function, all on their own, they’ll essentially be able to program new generations of software. When they understand context and the consequences of their actions, they’ll be able to replace most human workers, and possibly all of them. And when they’re allowed to reshape the models via which they appraise the world, they’ll actually be able to reprogram their own motivation.

All of the above won’t happen in the next few years, and certainly won’t be achieved in full in the next twenty years. As I explained, no serious AI researcher claims otherwise. The core message of researchers and visionaries who are concerned about the future of AI – people like Stephen Hawking, Nick Bostrom, Elon Musk and others – is that we need to start asking right now how to control these third wave AI systems, of the kind that’ll become ubiquitous twenty years from now. When we consider the capabilities of these AI systems, this message does not seem far-fetched.

The Last Wave

The most interesting question for me, which DARPA does not seem to dwell on, is what the fourth wave of AI systems would look like. Would it rely on an accurate emulation of the human brain? Or maybe fourth wave systems would exhibit decision-making mechanisms that we are incapable of understanding as yet – and which will be developed by the third wave systems?

These questions are left open for us to ponder, to examine and to research.

That’s our task as human beings, at least until third wave systems go on to do that too.